Neural Circuits 🧠⚡️👨🏽🔬

I’ve been getting into Neuroscience a lot recently, and I've been watching an inordinate number of TED talks and a whole buncha lectures about the brain and the circuitry with which it operates.

One lecture series I’ve been particularly fascinated by is the one offered by Neuroscience Online, an open-access e-textbook of sorts provided by the faculty at McGovern Medical School, The University of Texas Health Science Center at Houston (UTHealth).

The website might look a little dated but the wealth of information available on this topic is great. Not to mention the slides and the Adobe Flash animations are hilarious (but still informative). Prof. Byrne is very engaging to listen to and his explanations are easy enough to grasp by someone like me, who almost flunked Biology in High School.

The first lecture talks about neurons and the different types of circuits they form to pass and receive signals, which ultimately form the “neural highway” or “information highway” that is the backbone of our Central Nervous System (CNS). Prof. Byrne explains how neurons transmit these electrical impulses: the voltage has to cross a minimum threshold before the neuron fires its “excitation” (the action potential).

It’s fascinating to get behind the idea that these billions of neurons operate on electrical voltage transmission, like a switch or the other electrical systems we find around us. Especially so if, like me, you had a naive view of the sciences as being very distinctly separate, with “no possible way that they’ll be interlinked, right?”.

But the idea of viewing neurons and synapses as switches in circuits and electrical connections gives us the ability to extend our understanding of how they operate. Some neurons act as Excitators wherein they charge up and activate other neurons connected to them and some neurons act as Inhibitors wherein they charge up and deactivate other neurons. A connected bunch of these “Excitator” and “Inhibitor” neurons form a micronetwork.
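Here's a toy sketch of that switch idea in Python. It's a gross simplification: the threshold, the weights, and the `fires` function are all made up for illustration and are not taken from the lecture.

```python
# Toy model: a neuron fires when its summed, weighted input reaches a threshold.
# THRESHOLD and the weights below are illustrative assumptions, not real physiology.
THRESHOLD = 1.0

def fires(inputs, weights, threshold=THRESHOLD):
    """Return True if the weighted sum of the inputs reaches the threshold."""
    total = sum(i * w for i, w in zip(inputs, weights))
    return total >= threshold

# One active Excitator (+1.0) alone is enough to fire the target neuron.
excited = fires(inputs=[1], weights=[+1.0])

# Add an active Inhibitor (-1.0) and the same excitation no longer fires it.
inhibited = fires(inputs=[1, 1], weights=[+1.0, -1.0])

print(excited, inhibited)  # True False
```

The only difference between an “Excitator” and an “Inhibitor” in this sketch is the sign of the weight on the connection.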

An example would be

- an excitator neuron activating the bicep tendon to flex and show off dem gunz

- while an inhibitor neuron could deactivate the muscles around your mouth to close it off when offered Pepsi instead of Coke. (it’s not even a contest don’t @ me)

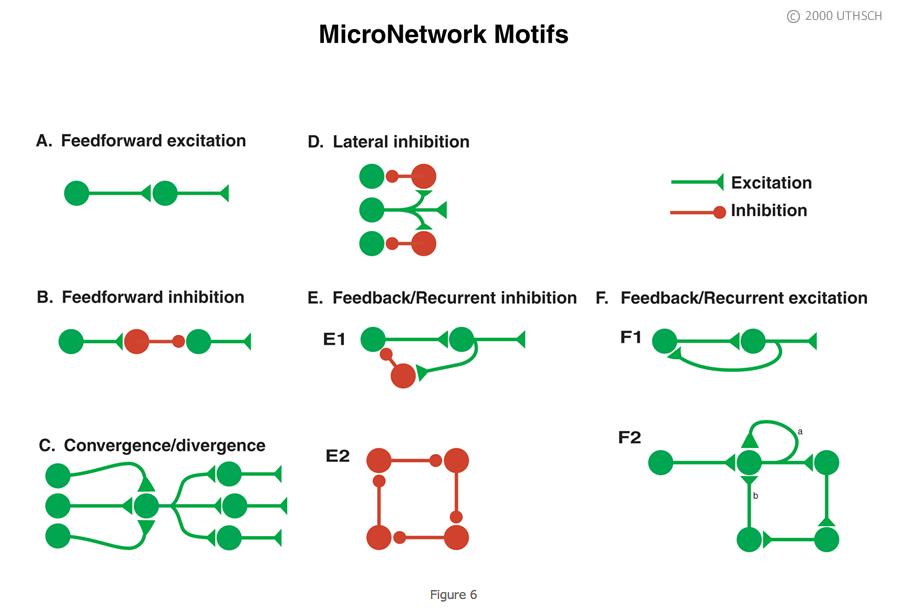

There are a handful of these “MicroNetwork Motifs”, which, as Prof. Byrne puts it, is a fancy way to describe a repeating pattern in how neurons connect to each other.

Some of these circuits seem very familiar to people with an engineering background

Courtesy of The University of Texas Health Science Center at Houston (UTHealth)

At first glance it may not be entirely obvious, but you could take a guess at where such motifs show up in our body functions.

- A feedforward excitation seems straightforward but when paired with a feedforward inhibition, you get the scenario where a doctor uses those lil hammer thingies to tap a tendon on your knee and whooooop goes your knee extending forward almost involuntarily. The feedforward network excites the contraction of the Quadriceps and inhibits the flexing of the Hamstring to cause your leg to extend.

- A recurrent inhibition system could be thought of as a cyclic, patterned behavior like breathing: the inhibition causes one phase, like breathing in, to stop so that we breathe out, which is subsequently inhibited in turn, and the cycle continues. It’s extremely important for this to be involuntary and reliable, because a failure here is what gets you killed. K?
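The knee-jerk example can be sketched as one signal fanning out into two pathways. This is a minimal toy, assuming made-up weights and a made-up "baseline" drive for the hamstring; the `knee_jerk` function and every number in it are hypothetical.

```python
# Toy knee-jerk reflex: one sensory signal feeds forward into two pathways.
# Weights and names are illustrative assumptions, not measured values.
EXCITE = +1.0   # sensory neuron -> quadriceps motor neuron
INHIBIT = -1.0  # sensory neuron -> (via an inhibitory interneuron) hamstring motor neuron

def knee_jerk(tap_strength, hamstring_baseline=1.0):
    """Return (quadriceps_drive, hamstring_drive) after a tendon tap."""
    quadriceps = max(0.0, EXCITE * tap_strength)
    hamstring = max(0.0, hamstring_baseline + INHIBIT * tap_strength)
    return quadriceps, hamstring

print(knee_jerk(0.0))  # (0.0, 1.0): no tap, hamstring keeps its baseline drive
print(knee_jerk(1.0))  # (1.0, 0.0): tap excites the quadriceps, silences the hamstring
```

The same tap pushes one muscle's drive up and the opposing muscle's drive down, which is why the leg swings forward instead of locking up.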

But the most fascinating thing about it all is how the Lateral Inhibition network operates, and an interesting example of it is the optical illusion below.

You must've seen a lot of these images which trick your brain into seeing something different from what's on screen. They started out on email forward chains, and the same images to this date make it into YouTube compilations titled “961 Optical Illusions that will blow your mind 🤯😱”.

What's common amongst most of these images is that they all use some sort of ‘Neuro-Visual’ trickery to get you to think “Everything I see is a lie 🥴" or "How can mirrors be real if our eyes aren't real 👁"

Courtesy of The University of Texas Health Science Center at Houston (UTHealth)

Take a look at the image above and try to focus on the area that divides the two grey blocks.

You can see the block on the left appears MUCH Darker at the junction.

And in the same way the block on the right appears to be MUCH Lighter right at the centre.

It’s not a bug.

It's a feature our brains developed called “Edge Enhancement”, and it's one of the most important survival skills our brains evolved to have.

The brain, essentially, is carving a path where the two edges meet and it's enhancing the brightness in the area of separation between the two objects. This enhancement is absolutely crucial to our survival as a species because it allows us to detect where objects end and where another object begins. It helps us in gauging empty/missing spaces and filled ones. It's how our hand knows exactly how much it needs to open up and curl into a claw to grab that bottle of water.

Imagine walking around in the middle of the night on a pitch-dark street.

You can kinda-sorta-maybe make out some hazy edges of the road and the sidewalk.

And in extreme cases, a cliff.

The way we find edges of objects in weird lighting conditions is thanks to Edge Enhancement.

You can thank your brain for not letting you walk off a cliff, even though you tell your friends you’ll do exactly that whenever you read a dumb tweet on Twitter.

Ok but how does our brain do this?

Lateral Inhibition

I’ll try to summarize Prof. Byrne's explanation of this phenomenon in his lecture.

Essentially if we strip all the complexities away, and grossly over-simplify everything :-

- The photo-receptors in our eyes allow us to detect illumination.

- And they send signals back to the neurons that indicate how bright the light was.

- Let’s say we can put these values on an integer scale from 0 to +100, running from dark shades of grey to lighter ones, with 100 being white.

- We can theoretically measure and compute what the brain sees when it sees the two grey blocks.

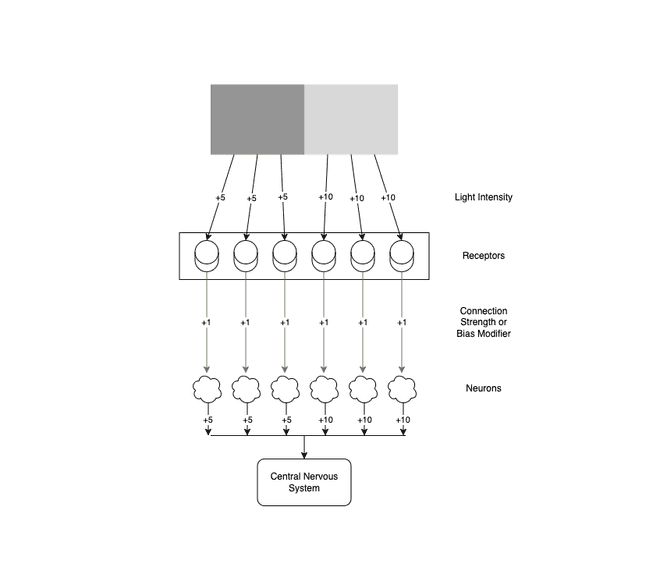

Here’s what that’d look like

Courtesy of Yours Truly. Yes I made this in Draw.io.

If we simplify further, assume the light intensity to be +5 for the grey block and +10 for the lighter grey block, and assume both blocks are equally illuminated:

- The photoreceptors receive these values and transmit them across to the neurons

- Each of these connections (represented by green arrows) has its own weight or “strength”, which scales the value passing through it by a constant multiplier

- And since these are Excitator Neurons, let’s assume a weight of +1

- So by the time the Light intensity value reaches the neuron, the end result is an intensity of +5

The logic follows for the lighter block in a similar way with the neurons receiving a value of +10 based on the intensity.
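As a quick sanity check, the no-inhibition case can be written out in a few lines of Python. The six-receptor row is an assumed layout (three receptors per block), picked only to keep the example short.

```python
# Each receptor talks only to its own neuron, with an excitatory weight of +1.
# Assumed layout: three receptors on the grey block, three on the lighter one.
EXCITATORY_WEIGHT = 1

light_intensity = [5, 5, 5, 10, 10, 10]
neuron_output = [EXCITATORY_WEIGHT * value for value in light_intensity]

print(neuron_output)  # [5, 5, 5, 10, 10, 10]
```

The output is just a copy of the input: flat on the left, flat on the right, with nothing special happening at the boundary.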

But this is not how our eyes and brain actually work. If the values across the blocks were uniform then we wouldn't see a darker edge and a lighter edge at the center.

The behavior that we see IRL can be explained if we apply the concept of Lateral Inhibition for these neurons (why should we? because science)

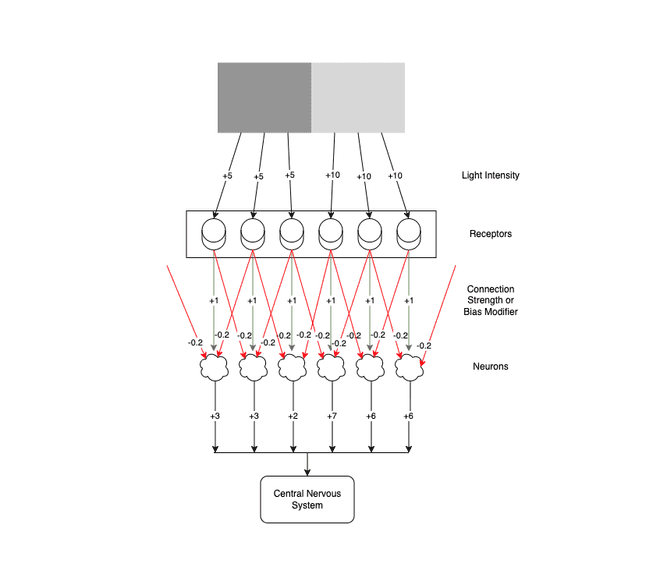

Here’s what it looks like with Lateral Inhibition

Courtesy of Yours Truly. Yes I made this in Draw.io too.

Alright this looks messy. Just tell me what’s changed

We still have the same receptors and the same neurons, but each receptor additionally connects to its neighbouring neurons and inhibits them. Those connections are represented by the red arrows. And these connections bring down the total value of the excitation by inhibiting some of it. Think of this as brakes on a car (thanks Prof)

It'd be understandable if the neurons would apply brakes on their own excitations when they deem it excessive, but what's happening here is they are impeding neighbouring neurons' excitations.

Why do these connections exist?

Neural circuits are in a constant state of tug-o-war, with each neuron's activation nudging the original input up or down. How these circuits are connected and how the synaptic connections behave is completely beyond this post, but you can think of it as a restaurant with each neuron acting as a worker assigned to a station. To make a pizza,

- Flour is made into a dough by one worker

- Meanwhile another starts working on the sauce

- Once you have both the dough and the sauce ready, you have a chef bring together the dough and the sauce and put on the cheese

- Then you hand it off to another worker that mans the oven where it gets baked

- And eventually the finished pizza is handed off to the waiter who brings it to the customer

- And then the customer consumes the pizza, which was ultimately flour, tomatoes, and cheese in the beginning.

Now imagine one of the workers overkneads the dough or oversalts the tomatoes that go in the sauce or adds too much cheese to the pizza.

The next worker who receives the pizza in the assembly line makes corrections to this mistake by leavening the dough more or fixing up the sauce or removing the extra cheese. These corrections can be done at any stage and by any worker.

Our neural circuitry is a billion restaurant workers and chefs constantly fixing each other's pizzas all the time. Without their work, all our pizzas would be oversalted, overbaked, soggy, dense, etc.

Okay back to the science

With these inhibitor connections in place, each neuron receives multiple values of varying amplitude.

Some tell it that it's illuminated to a level of +5, and others tell it to reduce that illumination by 2.

And the neuron's gotta take in all the inputs, add up these values, and send the total to the CNS to tell the brain what the sensed illumination was, so the brain can then construct an image of what the blocks look like.

And the summation goes something like this

- For receptors away from the boundary, whose neighbours all see the same shade, the total intensity is as follows

  - For the darker receptors: (+5 × -0.2) + (+5 × +1) + (+5 × -0.2) = +3

  - For the lighter receptors: (+10 × -0.2) + (+10 × +1) + (+10 × -0.2) = +6

- For receptors in the middle, which have a neighbouring receptor perceiving a different intensity of light, the total intensity is

  - For the darker receptors: (+5 × -0.2) + (+5 × +1) + (+10 × -0.2) = +2

  - For the lighter receptors: (+5 × -0.2) + (+10 × +1) + (+10 × -0.2) = +7

Now that’s interesting.

And that perfectly explains why we see

- the edge to be enhanced on the lighter side (+7 vs +6 normally)

- the edge to be dimmer on the darker side (+2 vs +3 normally)
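The same sums can be run over a whole row of receptors. This is a minimal sketch using the numbers from this post (+1 for a receptor's own neuron, -0.2 for each neighbour); the eight-receptor row and the `lateral_inhibition` function are assumptions made for illustration.

```python
# Lateral inhibition on a row of receptors, using the post's numbers:
# own receptor weight +1, each neighbouring receptor weight -0.2.
EXCITE = 1.0
INHIBIT = -0.2

def lateral_inhibition(intensities):
    """Weighted sum for every neuron that has a neighbour on both sides."""
    outputs = []
    for i in range(1, len(intensities) - 1):
        left, own, right = intensities[i - 1], intensities[i], intensities[i + 1]
        outputs.append(round(left * INHIBIT + own * EXCITE + right * INHIBIT, 1))
    return outputs

# Assumed layout: four receptors on the grey block (+5), four on the lighter one (+10).
row = [5, 5, 5, 5, 10, 10, 10, 10]
print(lateral_inhibition(row))  # [3.0, 3.0, 2.0, 7.0, 6.0, 6.0]
```

The two receptors at the very ends of the row are skipped because they only have one neighbour. The dip to 2 and the bump to 7 sit right at the boundary, which is the darker-then-lighter band you see in the illusion.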

This is, in part, how Convolutional Neural Networks pick out edges in images: their filters compute the same kind of weighted sum over neighbouring pixels. For example, an autonomous self-driving car learning to identify where the road ends and the sidewalk begins, or where the car in front starts and where there's empty space between cars.
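The weighted sums from the grey blocks are the same sliding multiply-and-add that a convolutional layer performs. Here's a plain-Python sketch that reuses the post's weights as a hand-picked kernel. The `convolve_1d` and `find_edge` helpers and the brightness values are made up for illustration, and a real CNN learns its kernels from data instead of having them chosen like this.

```python
# A 1-D "convolution" in plain Python: slide a small kernel across a row of pixels.
# The kernel reuses the post's lateral-inhibition weights; the pixel row is made up.
def convolve_1d(pixels, kernel):
    """Slide kernel over pixels, skipping positions where it would hang off the end."""
    half = len(kernel) // 2
    return [
        round(sum(k * pixels[i + j - half] for j, k in enumerate(kernel)), 1)
        for i in range(half, len(pixels) - half)
    ]

def find_edge(pixels, kernel):
    """Return the index (in pixels) just left of the biggest jump in the filtered row."""
    filtered = convolve_1d(pixels, kernel)
    jumps = [abs(b - a) for a, b in zip(filtered, filtered[1:])]
    return jumps.index(max(jumps)) + len(kernel) // 2

kernel = [-0.2, 1.0, -0.2]
road_and_sidewalk = [20, 20, 20, 20, 60, 60, 60]  # dark road, then brighter sidewalk

print(convolve_1d(road_and_sidewalk, kernel))  # [12.0, 12.0, 4.0, 44.0, 36.0]
print(find_edge(road_and_sidewalk, kernel))    # 3
```

The raw row steps from 20 to 60, but after filtering the step is stretched to 4 versus 44, so the boundary between pixel 3 and pixel 4 is much harder to miss.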

This is just one of many ways studying the brain has made our Artificial Intelligence systems that much smarter and to break the glass ceiling for furthering advanced techniques, we gots to look at our brainz.

Now if that doesn’t qualify as Pog, I’m not sure what can.